The Ramsay Filter v2

A Linter for Eliminating AI Slop

Yesterday I published an essay called “You Need to Be More of a Silly Goose.” The argument was about why smart people can’t follow exponential AI progress to its logical conclusion even when they have the data. Part of it is cognitive, because decades of 3% raises and 30-year mortgages wire your brain for straight lines. The other part is social, and harder to fix: saying the wild-sounding conclusion out loud costs you something even when you’re right. So people truncate.

I was proud of it. Sent it out Monday evening.

Ramsay Brown read it a few hours later. Ramsay is a neuroscientist, a Vibe Capital portfolio founder, and a friend. I previously named a “law” after him for detecting when AI-generated writing breaks down. His feedback was six words of compliment and then this: “The truncated pace of sentences is a tell. Reading it feels like reading Lego bricks in braille cause I can tell a current-Gen FM wrote it.”

He was right, and I knew it the moment I reread my draft with his note in my head. I’d used Claude heavily on the piece, and even after multiple editing passes the fingerprint survived. The individual sentences held up fine on their own. What Ramsay heard was the spacing between them: every paragraph hitting the same beats in the same order, every transition landing with identical weight, a metronomic quality that no single sentence revealed but that accumulated across the whole piece into something his trained ear could catch. He identified the specific failure as asyndeton (dropping conjunctions to create staccato rhythm), a pattern AI absorbed from Reddit and reproduces with zero variation. He pointed me to Ezra Pound’s 1913 essay “A Few Don’ts by an Imagiste” as a reference for what good prose avoids.

I’d been collecting detection heuristics for months at that point. “No em-dashes.” “Kill every false binary.” “Explain every technical term in the same sentence.” Useful rules, individually, but I didn’t have a theory for why they all worked. Ramsay’s observation gave me one. A human writer reaches for a fragment because that moment demands urgency, then lets the next sentence sprawl because the thought genuinely needed room. AI doesn’t work that way. It distributes fragments evenly across every paragraph, one per section, same interval, because “punchy” in its training data looks like uniform deployment of a technique rather than a human choosing when to use it.

So I built what I’m calling the Ramsay Filter. Then I ran the filter on itself. It failed its own test, which I probably should have anticipated. The document about avoiding symmetric constructions used symmetric constructions in every section. The section warning against uniform confidence was written with uniform confidence throughout.

What follows is the full filter. I will run it on every draft before publishing. It works on pitch decks and investment memos and blog posts, on anything where you need to figure out whether a human mind shaped the text or whether a very fluent machine produced something that looks like thinking but isn’t.

The Principle Beneath the Rules

AI slop is not a collection of bad habits. It is a single failure mode expressing itself in different ways: uniformity disguised as craft.

A human writer uses a sentence fragment for emphasis and it lands. An AI uses fragments twelve times in one essay and it becomes a fingerprint. A human writer reaches for a binary framing once because it genuinely clarifies, then moves on. An AI reaches for it every time it needs to sound incisive.

The underlying tell is never the technique. It is the evenness of the technique’s application. Human writing has texture because humans get tired, change their minds mid-paragraph, let a sentence run too long because the thought needed room, then cut the next one short because they felt the reader pulling away. AI writing is smooth in a way that real thought never is.

Ezra Pound put it simply in 1913: compose in the sequence of the musical phrase, not in the sequence of a metronome. The rules below are specific instances of metronomic writing. But the meta-rule is: if you can predict the rhythm of the next sentence from the rhythm of the last three, something is wrong.

Level 1: Structural Tells

These are architectural problems. When present, the text must be fundamentally rebuilt.

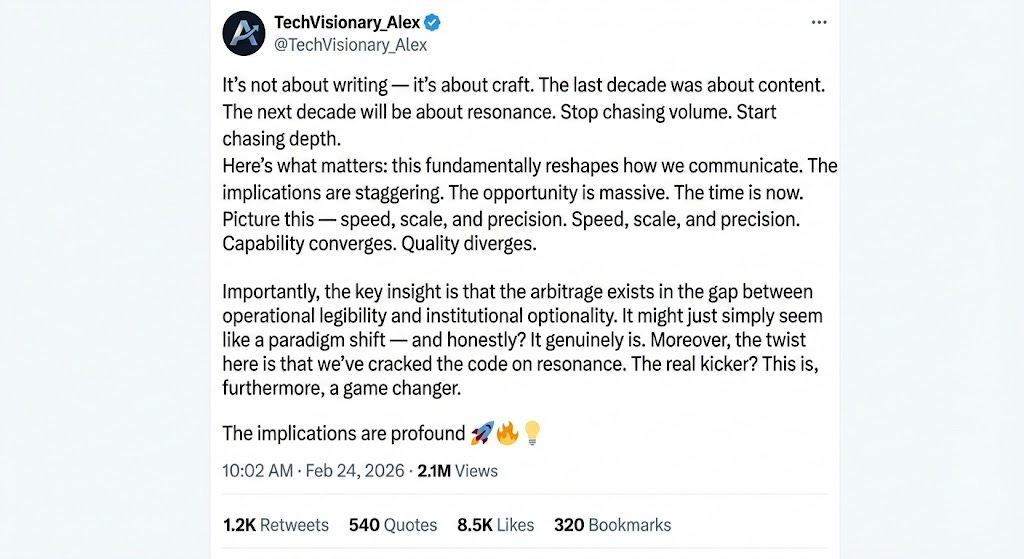

1. The False Binary

A rhetorical shortcut that dismisses one concept to artificially elevate another. “It’s not X, it’s Y.” This creates a clean narrative break that doesn’t exist in reality, where X and Y are usually tangled together, one causing the other, one evolving into the other, both true at the same time.

AI reaches for binaries because they compress messy relationships into clean sentences. That compression is useful for the model (fewer tokens, clearer structure) and terrible for the reader, who now holds a cartoon instead of a map.

What to hunt:

It’s not X, it’s Y

The bottleneck is not X; it is Y

They are not selling X; they are selling Y

It’s no longer X, it is Y

Show the relationship instead. If X is causing Y, say that. If X is evolving into Y, say that. The world doesn’t split cleanly and your sentence shouldn’t pretend it does.

FAIL: “The bottleneck is no longer GPUs; it is gigawatts.”

PASS: “The bottleneck in silicon is now creating a larger bottleneck in the grid.”
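The hunt list above is mechanical enough to sketch as a couple of regular expressions. This is a rough Python sketch, not a finished linter: the pattern list and the name `find_false_binaries` are illustrative assumptions, and the patterns will miss rephrasings and occasionally flag a sentence that is fine.

```python
import re

# Hypothetical patterns covering the "What to hunt" list; tune against your own drafts.
FALSE_BINARY_PATTERNS = [
    # "It's not X, it's Y" / "It's no longer X, it is Y"
    re.compile(r"\b(?:it's|it is)\s+(?:not|no longer)\b[^.;,]*[,;]\s*(?:it's|it is)\b", re.I),
    # "The bottleneck is not X; it is Y" / "They are not selling X; they are selling Y"
    re.compile(r"\b(?:is|are)\s+(?:not|no longer)\b[^.;]*;\s*(?:it|they)\s+(?:is|are)\b", re.I),
]

def find_false_binaries(text: str) -> list[str]:
    """Return the sentences that match any false-binary pattern."""
    normalized = text.replace("’", "'")  # treat curly and straight apostrophes alike
    sentences = re.split(r"(?<=[.!?])\s+", normalized.strip())
    return [s for s in sentences if any(p.search(s) for p in FALSE_BINARY_PATTERNS)]
```

A hit is a prompt to reread the sentence, not a verdict. The rewrite still has to come from knowing how X and Y actually relate.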

2. The Temporal False Binary

The same flattening, spread across time. “The last decade was about X. The next decade will be about Y.” Real history doesn’t work this way. Eras overlap, contradict, and coexist, and the clean break between them is almost always a story someone imposed after the fact because it made a good slide.

What to hunt:

The last decade was about X. The next decade will be about Y.

We are leaving the age of X and entering the age of Y.

X is the old way, Y is the new way.

Describe the actual mechanism of change. Name what’s accelerating, what persists, and where the overlap creates confusion or opportunity.

FAIL: “Defensibility shifts from owning a model to owning the discovery engine.”

PASS: “The most defensible asset becomes the discovery engine itself, potentially commoditizing the individual models it produces.”

3. The Imperative False Binary

The false binary wearing a command as a costume. “Stop doing X. Start doing Y.” It sounds decisive. It creates a false choice and flatters the reader into thinking clarity is the same as insight, which is a particularly dangerous confusion for an audience that makes allocation decisions.

What to hunt:

Stop doing X. Start doing Y.

Stop betting on X. Fund Y.

Forget about X. Focus on Y.

Name where the arbitrage actually is, and why. A specific thesis beats a generic command every time.

FAIL: “Stop betting on apps that describe the world. Fund the founders building the engines that run it.”

PASS: “The new arbitrage is in the interconnection queue. The highest returns will flow to founders who can navigate permitting faster than competitors can train a model.”

4. Abstraction Stacking

Using high-level nouns as if they were objects: “legibility,” “alignment,” “arbitrage,” “agency,” “constraint,” “optionality.” Each word feels like it’s doing work. None of them are. They’re placeholders for thoughts the writer hasn’t finished thinking, and a sentence full of them is a sentence where nothing touches the ground.

AI does this because abstract nouns compress complex ideas into single tokens. The model can chain them together fluently without committing to a specific claim. “The arbitrage in operational legibility creates defensive optionality” is eight words of nothing. Try saying it to someone at a bar. They’d walk away, and they’d be right to.

The rule: Every abstract noun must touch a doorknob within one sentence. “Doorknob” means a concrete image, a specific example, or a number. If you write “constraint,” the next clause names which one: “the 18-month interconnection queue in PJM West.” If you write “leverage,” you say whose and over what: “a startup with ten engineers delegating to fifty overnight agents.”

FAIL: “The arbitrage exists in the gap between operational capability and institutional readiness.”

PASS: “The company that starts delegating to agents this quarter runs 48 experiments by December. The company writing a readiness assessment runs 16.”

5. Unpriced Claims

Asserting that something matters, changes everything, or creates massive value without naming what breaks, who pays, what gets harder, or how long it takes. Force without cost. AI does this constantly because it has no skin in the game and never has to be right on Tuesday.

This one I’m most confident about, because it maps directly to how my readers think. They invest real money. A claim without a price is a claim they can’t evaluate and will skip. “This will reshape the industry” tells them nothing. “This cuts validation cycles from three months to three weeks, but requires a $50M measurement apparatus that takes two years to build” tells them everything they need to decide whether to take the next meeting.

The rule: Every claim that uses force language (“will transform,” “creates massive,” “reshapes,” “unlocks”) must be followed within two sentences by at least one of: a cost, a bottleneck, a failure mode, or a timeline. If you can’t name one, you probably don’t understand the claim well enough to make it.

FAIL: “Quantum biosensing will revolutionize drug discovery.”

PASS: “Quantum biosensing could cut screening time from weeks to hours. The bottleneck is that only six facilities can package these chips at scale, and they’re booked through 2027.”
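The two-sentence rule can be approximated in code. The sketch below is a heuristic under stated assumptions. `FORCE_WORDS` starts from the four phrases in the rule and adds “revolutionize” from the FAIL example. `PRICE_MARKERS` is an invented stand-in for “a cost, a bottleneck, a failure mode, or a timeline”: any digit, a dollar sign, or a handful of cost and time words. It also counts a price that appears in the claim sentence itself, which the rule does not spell out. Spelled-out numbers such as “six” are not detected.

```python
import re

# Force phrases from the rule, plus "revolutionize" from the FAIL example (an addition).
FORCE_WORDS = re.compile(
    r"\b(?:will transform|creates massive|reshapes?|unlocks?|revolutionize)\b", re.I
)
# Assumed proxy for "a cost, a bottleneck, a failure mode, or a timeline".
PRICE_MARKERS = re.compile(
    r"\d|\$|\b(?:bottleneck|costs?|requires?|fails?|breaks?|weeks?|months?|quarters?|years?)\b",
    re.I,
)

def find_unpriced_claims(text: str) -> list[str]:
    """Return force-language sentences with no price marker in the claim or the next two sentences."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    flagged = []
    for i, sentence in enumerate(sentences):
        if not FORCE_WORDS.search(sentence):
            continue
        window = sentences[i : i + 3]  # the claim plus the two sentences after it
        if not any(PRICE_MARKERS.search(s) for s in window):
            flagged.append(sentence)
    return flagged
```

The check only confirms that something price-shaped is nearby. Whether the number is the right one is still the writer's job.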

Level 2: Rhythmic Tells

These are sentence-level problems. Individually, each technique is fine. The problem is uniform deployment. One of these in an essay is a choice. Five is AI slop.

6. Metronomic Asyndeton

Dropping conjunctions to create a staccato rhythm. “Short phrase. Short phrase. Short phrase.” AI learned this from Reddit, where it reads as punchy and authoritative. In an essay, deployed repeatedly, it reads as a machine that learned one trick for sounding urgent.

Examples:

“In the shower. Late at night. They’ve followed the line.”

“Then learned to reason. Then learned to work fourteen hours.”

“The tightness. The urge to qualify.”

Each instance works in isolation. The first time you use staccato fragments, they hit hard. The sixth time, the reader’s ear catches the pattern even if their conscious mind doesn’t, and the text starts to feel manufactured. Ramsay described the sensation as “reading Lego bricks in braille,” which is the most precise diagnosis of this problem I’ve encountered.

The fix is variation, and it has to be deliberate. Follow a series of fragments with a sentence that takes its time, that lets a thought unspool across two clauses because the idea actually needed that room. Then cut the next one short. The variation is the texture. An essay that’s all fast is a metronome even if every individual beat sounds good.

Practical test: Read three consecutive sentences aloud. If they have the same rhythm, rewrite the middle one longer or shorter. If a paragraph has more than two sentences under eight words in a row, at least one needs to breathe.
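The second half of that test is a counting rule, so it can be checked automatically. Here is a minimal Python sketch, assuming a naive sentence splitter (break after `.`, `!`, or `?`) and whitespace-separated words. The function name and its parameters are illustrative, with defaults taken from the thresholds above.

```python
import re

def short_sentence_runs(paragraph: str, max_words: int = 8, max_run: int = 2) -> list[list[str]]:
    """Return each run of more than `max_run` consecutive sentences under `max_words` words."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", paragraph.strip()) if s]
    runs, current = [], []
    for sentence in sentences:
        if len(sentence.split()) < max_words:
            current.append(sentence)  # still inside a run of short sentences
        else:
            if len(current) > max_run:
                runs.append(current)
            current = []  # a longer sentence ends the run
    if len(current) > max_run:
        runs.append(current)  # the paragraph ended mid-run
    return runs
```

Each returned run is a place where at least one sentence probably needs to breathe. The read-aloud half of the test has no code equivalent.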

7. Symmetric Constructions

Parallel structure deployed as a tic rather than a choice. “X does Y. A does B.” The balanced, mirrored sentence that sounds polished and reveals nothing. AI reaches for parallelism the way a nervous speaker reaches for “um,” not because the thought demands it but because the pattern is available and feels safe.

Examples:

“Capability converges. Operations diverge.”

“The models get smarter. The failures get weirder.”

Use parallel structure once per section, at most. When you catch yourself building a matched pair, break the symmetry. Make one side longer than the other. Add a subordinate clause to the second half that the first half didn’t have. Let the sentence be lopsided the way actual thinking is lopsided, because prose argues, and arguments rarely weigh the same on both sides.

8. The Pileup

Lists of three or more items deployed for rhetorical weight. When a human does this once, it’s emphasis. When the text does it four times, it’s padding. The telltale version is three abstract nouns used as a paragraph closer: “speed, scale, and precision” or “power, optics, and wet labs.”

Hunt for three-item lists used as paragraph closers or section openers, especially when the three items are near-synonyms or don’t represent genuinely distinct ideas.

The fix: if all three items matter, give the most important one its own sentence with a real number in it. If they don’t all matter, cut to the one that does. “Speed” is not insight; “the cycle closed in three weeks instead of three months” is.
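Paragraph-closing triplets are easy to hunt by pattern. The following Python sketch assumes paragraphs are separated by blank lines and that a pileup looks like “A, B, and C” with one-word items (the last item may be two words). Both the regex and the name `triplet_closers` are illustrative, and longer list items will slip through.

```python
import re

# Assumed shape of a pileup: "word, word, and word" (last item may be two words)
# sitting at the very end of a paragraph, followed only by punctuation.
TRIPLET_CLOSER = re.compile(r"\b(\w+, \w+,? (?:and|or) \w+(?: \w+)?)\W*$")

def triplet_closers(text: str) -> list[str]:
    """Return the three-item list that closes each paragraph, where one exists."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    closers = []
    for paragraph in paragraphs:
        match = TRIPLET_CLOSER.search(paragraph)
        if match:
            closers.append(match.group(1))
    return closers
```

More than one result for a single piece is the signal to look for. A lone triplet may well be a choice.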

Level 3: Texture Tells

These are the subtlest signals, and I’m less certain about some of them. The first two levels are mechanical enough that you can check a draft in ten minutes. This level is more like taste, which means it’s harder to teach and easier to get wrong. But it’s also where the difference lives between writing that passes inspection and writing that a thoughtful reader trusts without quite knowing why.

9. The Absence of Constraint Contact

AI writing is too clean. Every paragraph advances the argument. Every sentence earns its place. Every transition is smooth. Real writing has seams, not because humans are sloppy but because honest thinking encounters limits. The thing you don’t know yet. The tradeoff that stays true even after you pick a side. The place where your argument works for three cases and breaks on the fourth.

AI never hits these limits because it isn’t actually thinking through the implications of what it’s saying. The absolute coherence is the tell.

What to do: Find one place in the draft where you name a specific unresolved variable or tradeoff. Not vague hedging (“there are challenges ahead”). Specific contact with a limit: “This works for protein design where the scoring function is well-defined. Whether it works for small molecules, where the scoring function is almost unmeasured, nobody knows yet.” The reader recognizes the texture of someone who has pushed their own argument hard enough to find where it cracks, and that recognition builds more trust than any amount of confident assertion.

10. The Absence of Speech-Shaped Syntax

AI follows Pound’s “no superfluous word” rule too well. Every word works. Every sentence is lean and complete. But human writers construct sentences the way people actually build thoughts: a clause that starts in one direction, then a subordinate clause that qualifies it because the writer realized mid-sentence that the first clause wasn’t quite right on its own. Not just filler words sprinkled for warmth, but actual syntactic shapes that only appear when someone is thinking in real time.

What to do: Read the draft aloud. Where every sentence is a clean subject-verb-object declaration, find the one that should have been two clauses joined by “because” or “which means” or “though,” because sometimes a thought genuinely needs that extra room and compressing it into a single declaration makes it sound like a fortune cookie. The target is the voice of someone explaining something they care about to someone they respect.

11. Uniform Confidence

AI maintains the same level of assertion throughout a piece. Every claim lands with equal force. Human writers modulate, and the modulation carries information. They’re more tentative on uncertain ground and more forceful when they know they’re right. They’ll hedge in the middle of a paragraph and then commit hard at the end, because that’s what it feels like to work through a thought and arrive somewhere.

What to do: Find the claim you’re most sure of and the claim you’re least sure of. Make sure the reader can tell the difference from tone alone. You don’t need to flag uncertainty with “I’m not sure about this.” You need the rhythm and word choice to be visibly different. That unevenness is the texture of real thought, and its absence is one of the reasons AI prose feels flat even when every individual sentence is strong.

Quick Reference

A handy-dandy “Kill List”: everything above, compressed into a checklist you can tape to your monitor.

Structure (rebuild if present):

“It’s not X, it’s Y” and all variants

“The last decade / the next decade”

“Stop doing X. Start doing Y.”

Abstract nouns without a doorknob (concrete image, specific example, or number) within one sentence

Force claims (“will transform,” “reshapes,” “unlocks”) without a cost, bottleneck, failure mode, or timeline within two sentences

Rhythm (break the pattern if repeated):

More than two consecutive sentences under eight words

Parallel constructions (“X does Y. A does B.”) used more than once per section

Three-item lists used as rhetorical closers more than once per piece

Texture (audit before publishing):

Is confidence level uniform throughout, or does it vary with the strength of the underlying evidence?

Is there at least one place where you name a specific limit, unknown, or tradeoff?

Does at least one sentence have speech-shaped complexity (subordination, mid-sentence turns, a clause that earns its length)?

Read aloud: does it sound like one person thinking, or like organized content?

The Nuclear Option: Read the piece to someone. If they say “this is really well-written” instead of engaging with the argument, it’s too clean. Rewrite until they argue with you about the content and forget to comment on the prose.

The Meta-Rule

Every item on this list is a specific instance of the same underlying problem: AI distributes techniques with robotic evenness. Humans cluster them with chaotic randomness.

AI spreads fragments evenly across every paragraph. It places one binary per section. It deploys parallel structure at regular intervals. The spacing is metronomic even when the individual notes are correct, and that’s what Ramsay was hearing when he said the post read like Lego bricks in braille. Not that any single brick was “wrong”; that they were all the same size, snapped together at the same interval, producing a surface that was technically precise and completely dead to the touch.

A human writer will use four fragments in a row because that moment demands urgency, then write a 40-word sentence because the next thought is complex, then drop a one-word paragraph because they want the reader to stop and feel something. The distribution is lumpy, and the lumpiness is the music.
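Lumpiness can be given a crude number. The sketch below measures how much sentence length varies by dividing the standard deviation of the word counts by their mean. This statistic is an assumption introduced here as a stand-in for rhythm, and it comes with no threshold: a score of 0.0 means every sentence is the same length, and higher means lumpier.

```python
import re
import statistics

def sentence_lengths(text: str) -> list[int]:
    """Word count of each sentence, using a naive split after ., !, or ?."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]

def rhythm_spread(text: str) -> float:
    """Standard deviation of sentence lengths divided by their mean (0.0 = perfectly even)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # one sentence has no rhythm to measure
    return statistics.pstdev(lengths) / statistics.mean(lengths)
```

Comparing the score of a suspect draft against something you wrote by hand is more useful than chasing an absolute value, since length is only one dimension of what the ear picks up.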

The final test: read the whole piece aloud. Does it sound like one person thinking, with all the unevenness and self-correction and variable energy that implies? Or does it sound like a well-organized system producing content? If the latter, the fix is clustering. One paragraph gets to be ragged and urgent. The next one takes its time, lets a thought run long because it needed the room. Then the third goes precise and cold.

Variation across paragraphs is what makes a reader trust that there’s a mind behind the text.

Or, as Ramsay put it: spend thirty minutes smearing your human grease on it until it’s blurry and emotional and wrong. Which makes it right.
